Asking Smaller Questions

How GPS, handwriting recognition, and stroke care were retooled around human judgment

I read today that Gladys West passed away last week. Her work in mathematically modeling the Earth was foundationally important to GPS.

GPS is one of those technologies that has faded so completely into the background of modern life that we barely notice it anymore, which is usually how you know something worked. Satellites, atomic clocks, relativistic corrections, all quietly cooperating so that a blue dot appears where you expect it to.

What sparked my thinking, though, isn’t how GPS works. It’s how, as GPS systems moved from open environments into dense urban ones, the limits of the original question were exposed. Previously discarded data became useful once a different question was asked.

That shift wasn’t abstract. It was driven by motivation.

For a long time, GPS asked a technical question: Where am I?

That framing made sense when GPS lived in open environments: aviation, maritime navigation, and highways. Under that framing, messy signals were noise. Multipath reflections, unstable phase information, and urban interference were all treated as extraneous, outside the solution space.

But as applications built atop GPS were used in cities, the motivation changed. People weren’t trying to locate themselves in a vacuum. They were trying to do something concrete: get picked up by a rideshare, meet someone at the right corner, follow walking directions to a café.

Under that motivation, Where am I? stopped being the best or only question.

The more useful question became: Which side of the street am I on?

Sidebar: Why GPS Gets (or Got) Confused in Cities

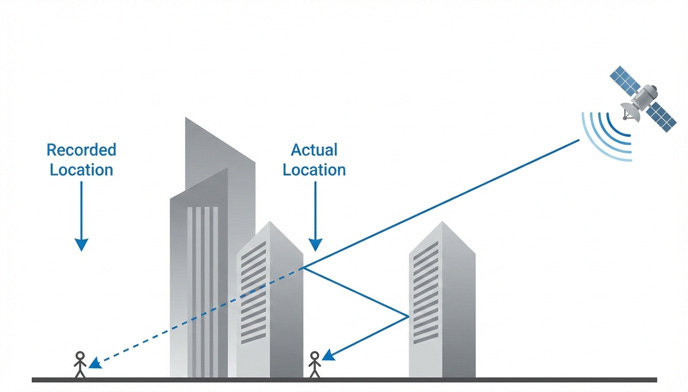

In dense urban environments, GPS gets confused because signals bounce off buildings before reaching the receiver. The system either discards those signals as errors or, because a reflected signal takes longer to arrive, incorrectly concludes that the person is somewhere else.

Under the original question, Where am I?, this behavior makes sense. Reflected signals look like bad data and are treated as such.

But once the question changes, those same distortions become informative. The reflections aren’t random. They differ depending on where the person actually stands, often enough to distinguish between two nearby possibilities, like opposite sides of the same street.

The signal didn’t change. The question did.
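
One way to make this concrete is a toy version of a technique sometimes called shadow matching: for each candidate position, a 3D building model predicts which satellites should have a clear line of sight, and the candidate whose prediction best agrees with what the receiver actually observed wins. The sketch below is illustrative only. The satellite IDs, the visibility sets, and the `score_candidate` and `pick_side` names are assumptions made up for this example, not any vendor's implementation.

```python
from typing import Dict, Set


def score_candidate(predicted_visible: Set[str],
                    observed_strong: Set[str],
                    all_sats: Set[str]) -> int:
    """Count satellites whose observed state agrees with the prediction.

    A satellite agrees if it was predicted visible and received strongly,
    or predicted blocked and received weakly (or not at all).
    """
    score = 0
    for sat in all_sats:
        predicted = sat in predicted_visible
        observed = sat in observed_strong
        if predicted == observed:
            score += 1
    return score


def pick_side(candidates: Dict[str, Set[str]],
              observed_strong: Set[str],
              all_sats: Set[str]) -> str:
    """Return the candidate position whose predicted visibility fits best."""
    return max(
        candidates,
        key=lambda name: score_candidate(candidates[name], observed_strong, all_sats),
    )


# Hypothetical data: satellites overhead and the building model's predictions.
ALL_SATS = {"G01", "G07", "G12", "G19", "G23"}
CANDIDATES = {
    "north_side": {"G01", "G07", "G12"},  # predicted clear sky from the north sidewalk
    "south_side": {"G12", "G19", "G23"},  # predicted clear sky from the south sidewalk
}
OBSERVED_STRONG = {"G12", "G19", "G23"}   # satellites actually received strongly

print(pick_side(CANDIDATES, OBSERVED_STRONG, ALL_SATS))
```

Notice that the function never estimates coordinates. It only chooses between two actionable options, which is the smaller question.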

Reframing the Problem

Which side of the street am I on? isn’t a more precise version of the original problem. It’s a different problem, shaped by a different human need. And under that question, data that had previously been discarded became useful. Not because it got cleaner, but because it helped distinguish between two actionable options.

This is often described as reframing the problem. That’s accurate, but incomplete.

Reframing is what we call it after the fact. What actually drives these moments is judgment and curiosity. Curiosity about whether discarded data might still be saying something. Judgment about what people actually need in order to act.

Once you see this pattern, it shows up elsewhere.

PalmPilot vs Newton: Making Handwriting Recognition Reliable

The Newton versus PalmPilot story is usually told as a lesson in execution. It’s more precise to describe it as a lesson in questions.

The original question, implicitly asked by Apple’s Newton, was: How do we get computers to recognize human handwriting?

That’s an open-ended perception problem. It assumes the burden of intelligence sits entirely with the machine. Apple tried to make the computer smarter, and given the hardware and software constraints of the time, it failed.

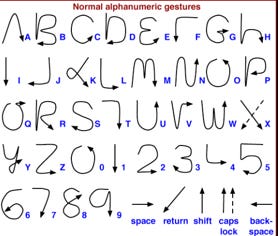

Palm reframed the problem by asking a different question: How do we increase the reliability of a handwriting recognition system?

That shift matters. Reliability doesn’t require perfect understanding. It requires consistency. And consistency can come from either side of the interaction.

Palm recognized that intelligence could be distributed across the system. You could make the computer smarter. Or you could make the user smarter.

They chose the latter.

By introducing Graffiti, Palm trained users to write in a constrained, machine-friendly way. The alphabet was limited. Ambiguity was removed. Users learned the system quickly, and in return, they got something that worked.
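
The design choice can be sketched in a few lines. If every character is one agreed-upon stroke, recognition reduces to an exact lookup instead of open-ended perception. The stroke encoding below (a sequence of pen directions) and the three-letter table are hypothetical and invented for illustration. They are not Graffiti's actual stroke set.

```python
from typing import Iterable, Optional, Sequence

# Hypothetical constrained alphabet: one unambiguous stroke per character.
STROKE_TABLE = {
    ("up", "down"): "A",
    ("down",): "I",
    ("down", "right"): "L",
}


def recognize(stroke: Sequence[str]) -> Optional[str]:
    """Return the character for a stroke, or None if it is not in the alphabet."""
    return STROKE_TABLE.get(tuple(stroke))


def recognize_word(strokes: Iterable[Sequence[str]]) -> str:
    """Recognize a sequence of strokes, marking unknown strokes with '?'."""
    return "".join(recognize(s) or "?" for s in strokes)


print(recognize_word([["up", "down"], ["down"], ["down", "right"]]))
print(recognize_word([["left", "up"]]))
```

The machine does no guessing here. Anything outside the agreed alphabet is rejected outright, and the consistency comes from the writer.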

Palm didn’t solve Handwriting Recognition. It solved Reliable Interaction.

Same stylus. Same screen. Different motivation. Smaller question.

Stroke Triage in Emergency Care

Nowhere is the role of motivation clearer than in stroke care.

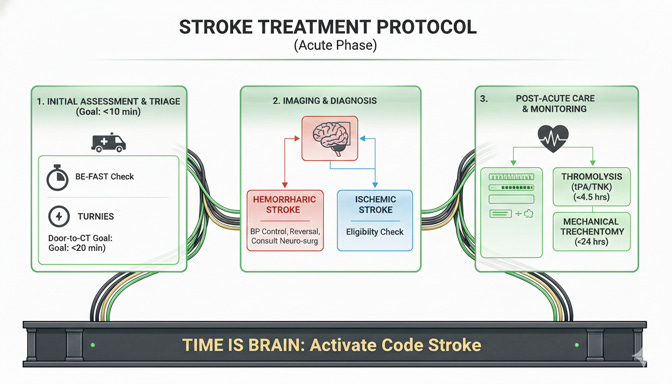

A person presents in an emergency room with acute neurologic symptoms. Information is incomplete. Time pressure is extreme. Risk is asymmetric.

The naive technical question is: Is this a stroke?

That question quietly asks the system (the people, the protocols, the handoffs) to produce certainty immediately. It’s extraordinarily hard to answer reliably in real time, and the cost of being wrong is severe.

But the real motivation in the room is different: increase survivability, even at the cost of resources.

Under that motivation, diagnostic certainty and expense both become secondary to speed. The useful question becomes: Who needs to be escalated right now?

That reframing collapses the problem into a simple, time-driven flow. Are there stroke-like signs? Is the patient within a treatment window? Should imaging and specialist attention be prioritized immediately?

The output isn’t a diagnosis. It’s urgency.
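
The flow described above can be sketched as a function that returns an urgency level and never a diagnosis. This illustrates the reframing only and is not clinical guidance. The field names, the `Urgency` levels, the `window_hours` parameter, and the choice to treat an unknown onset time as urgent are all assumptions, and the example window value is a placeholder.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Urgency(Enum):
    ESCALATE_NOW = "escalate now"  # prioritize imaging and specialist attention
    ESCALATE = "escalate"          # stroke-like signs, but outside the window
    ROUTINE = "routine"            # no stroke-like signs observed


@dataclass
class Presentation:
    stroke_like_signs: bool
    hours_since_onset: Optional[float]  # None when onset time is unknown


def triage(p: Presentation, window_hours: float) -> Urgency:
    """Answer 'who needs to be escalated right now?', not 'is this a stroke?'."""
    if not p.stroke_like_signs:
        return Urgency.ROUTINE
    # Assumption: because risk is asymmetric, an unknown onset time is
    # treated as if the patient were still inside the treatment window.
    if p.hours_since_onset is None or p.hours_since_onset <= window_hours:
        return Urgency.ESCALATE_NOW
    return Urgency.ESCALATE


EXAMPLE_WINDOW_HOURS = 4.0  # placeholder value, not a clinical threshold

print(triage(Presentation(True, 1.5), EXAMPLE_WINDOW_HOURS).value)
print(triage(Presentation(True, None), EXAMPLE_WINDOW_HOURS).value)
print(triage(Presentation(False, 0.5), EXAMPLE_WINDOW_HOURS).value)
```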

The system doesn’t replace clinicians. It accelerates judgment. The outcome that matters isn’t epistemic correctness. It’s Time-To-Intervention.

The systems that work best frequently do this. They don’t eliminate human judgment. They make room for it by asking questions that are small enough and concrete enough to inform and compel confident action.

Across GPS, handwriting recognition, and stroke triage, the pattern is consistent.

Motivation comes first. Questions follow.

When systems are built around unchanging, abstract correctness, they struggle in the real world. When they’re built around real human goals, judgment reshapes the question into something smaller and answerable.

That’s the common thread.

In each case, progress came from understanding why the system existed, paying attention to how humans behave inside it, and being willing to let go of the original question.

The breakthroughs didn’t come from knowing more. They came from knowing what had changed and what now mattered, and then asking questions small enough to help people act.
